I've been asked a few times whether HCX can be used when a customer has a route-based VPN into VMware Cloud on AWS and is advertising the default route 0.0.0.0/0 into the SDDC. The short answer is yes, this is supported and works.

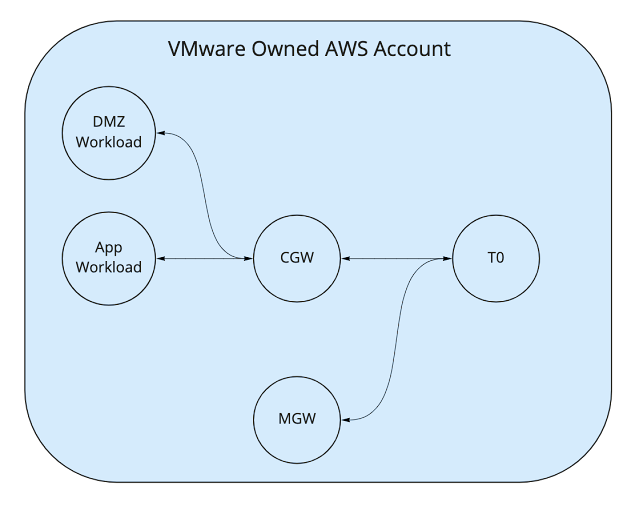

The long answer starts with why the question comes up. When we advertise the default route 0.0.0.0/0 into the SDDC, all traffic leaving the SDDC flows via on-premises, including traffic destined for the internet. Some customers prefer this because it sends all outbound internet traffic through their perimeter firewall, where their security and logging policies are applied.

The confusion is around HCX. HCX cannot use an existing IPsec VPN tunnel to send traffic from on-premises into the SDDC (as per the KB article), so it needs to establish its own. The HCX-IX Interconnect and HCX Network Extension appliances each establish an IPsec VPN tunnel from on-premises to their peer appliances in the SDDC using UDP/4500. So the question is: if we are advertising 0.0.0.0/0 into the SDDC so that all traffic traverses the route-based IPsec VPN tunnel back to on-premises, how can the HCX-IX Interconnect and HCX Network Extension appliances communicate with their peers?
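To make the premise of the question concrete, here is a minimal sketch of longest-prefix-match route selection, the standard way a router picks a next hop. It is a simplified model and not the actual SDDC routing table: the `ROUTES` list, the `next_hop` helper, the `192.168.10.0/24` segment and the next-hop labels are all hypothetical and only there for illustration.

```python
import ipaddress

# Hypothetical, simplified route table (an assumption for illustration only).
# The default route is the one learned over the route-based VPN.
ROUTES = [
    (ipaddress.ip_network("0.0.0.0/0"), "route-based VPN (on-premises)"),
    (ipaddress.ip_network("192.168.10.0/24"), "local SDDC segment"),
]


def next_hop(destination: str) -> str:
    """Return the next hop of the most specific route matching destination."""
    addr = ipaddress.ip_address(destination)
    # Collect every route whose prefix contains the destination address.
    matches = [(net, hop) for net, hop in ROUTES if addr in net]
    # Longest prefix wins; 0.0.0.0/0 matches any IPv4 address but is the
    # least specific route, so it is used only when nothing else matches.
    _, hop = max(matches, key=lambda match: match[0].prefixlen)
    return hop


print(next_hop("192.168.10.25"))  # local SDDC segment
print(next_hop("8.8.8.8"))        # route-based VPN (on-premises)
print(next_hop("198.51.100.7"))   # route-based VPN (on-premises)
```

In this model, any destination without a more specific route falls through to 0.0.0.0/0 and is sent over the VPN to on-premises, which is exactly why the HCX question gets asked.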