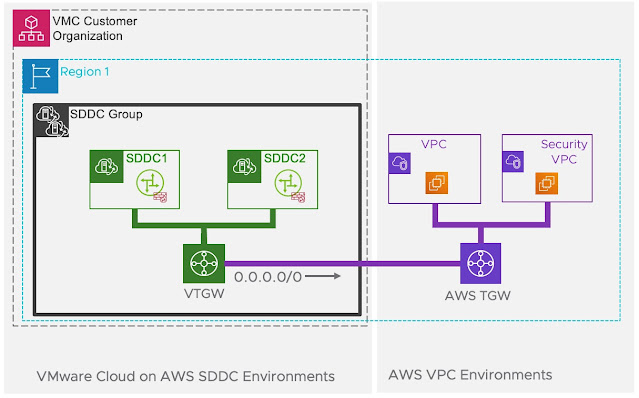

I recently had a query from a customer who was implementing intra-region peering between a VMware Transit Gateway (vTGW) and a native AWS Transit Gateway (TGW), which would then be attached to a security VPC. Their requirement was to ensure that all VM connectivity from the SDDC would traverse the security VPC before egressing out to the internet or back to on-premises. This would require them to add a static route into the vTGW to point all traffic (0.0.0.0/0) to the peering attachment which connects the vTGW to the TGW. From there they would need to ensure the correct routing is in place on the TGW to send all traffic to the security VPC, and also verify that the correct routes are in place for the reverse traffic.
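For the native TGW side of this, the static routes could be sketched roughly as follows. This is a minimal sketch under assumptions, not the customer's actual configuration: it assumes one TGW route table associated with the peering attachment and a separate one associated with the security VPC attachment, and every ID and CIDR shown is a hypothetical placeholder. The only AWS call used is the EC2 `create_transit_gateway_route` operation, which is passed in via a client object so the route list can be inspected before anything is applied. The 0.0.0.0/0 route on the vTGW itself is configured on the VMware side and is not covered here.

```python
import ipaddress

DEFAULT_ROUTE = "0.0.0.0/0"


def build_tgw_static_routes(sddc_cidrs, peering_rt_id, security_rt_id,
                            peering_attachment_id, security_attachment_id):
    """Build the static routes for the native TGW (hypothetical layout).

    Forward path: traffic arriving from the vTGW peering attachment is sent
    to the security VPC attachment via a default route.
    Return path: traffic coming back from the security VPC is sent to the
    peering attachment for each SDDC CIDR.
    """
    routes = [{
        "DestinationCidrBlock": DEFAULT_ROUTE,
        "TransitGatewayRouteTableId": peering_rt_id,
        "TransitGatewayAttachmentId": security_attachment_id,
    }]
    for cidr in sddc_cidrs:
        # Validate the CIDR early; raises ValueError if it is malformed.
        network = ipaddress.ip_network(cidr)
        routes.append({
            "DestinationCidrBlock": str(network),
            "TransitGatewayRouteTableId": security_rt_id,
            "TransitGatewayAttachmentId": peering_attachment_id,
        })
    return routes


def apply_routes(ec2_client, routes):
    """Create each route using an EC2 client (for example boto3.client("ec2"))."""
    for route in routes:
        ec2_client.create_transit_gateway_route(**route)


# Placeholder values for illustration only.
example_routes = build_tgw_static_routes(
    sddc_cidrs=["10.2.0.0/16"],
    peering_rt_id="tgw-rtb-peering-example",
    security_rt_id="tgw-rtb-security-example",
    peering_attachment_id="tgw-attach-peering-example",
    security_attachment_id="tgw-attach-security-example",
)
```

Building the route list separately from applying it makes it easy to review both directions of the path before touching the TGW.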

This particular customer was looking to migrate out of an on-premises datacentre and was going to utilise HCX to perform the migration. They also had a requirement to maintain IP addresses, which required stretched layer 2 between on-premises and VMC on AWS. Since they were not licensed for the vSphere Distributed Switch, we had to deploy the NSX Standalone edge for stretched layer 2.

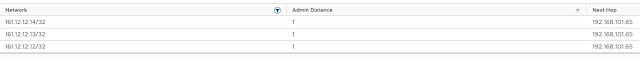

The customer had a few questions, all based on what impact adding the default route into the vTGW to send all traffic to the TGW would have on the stretched layer 2 VPN tunnel as well as the HCX Interconnect tunnels. The short answer is none. The stretched layer 2 VPN and HCX will continue to function as long as they are configured to use the public IP addresses rather than the internal IP addresses. The reason this works is that when you create a VPN (layer 2, policy-based or route-based) we add a static route into the T0 for the remote public IP address with the next hop being the Internet Gateway. Since this is a more specific route, VPN traffic will continue to egress out of the Internet Gateway rather than take the default route to the vTGW. As an example, I created three VPNs with different remote public IPs.

If we then take a look at the T0 route table, we can see three /32 routes for the remote public IPs we created above, with the next hop being the Internet Gateway.
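The longest-prefix-match behaviour behind this can be sketched in a few lines of Python. This is an illustrative model only, not output from an SDDC: the route table, the documentation-range IP addresses and the next-hop labels are all hypothetical stand-ins for the three /32 VPN routes and the default route towards the vTGW.

```python
import ipaddress

# Hypothetical T0 route table: a default route towards the vTGW plus one
# /32 static route per VPN remote public IP (documentation-range addresses).
T0_ROUTES = [
    ("0.0.0.0/0", "vTGW"),
    ("203.0.113.10/32", "Internet Gateway"),
    ("203.0.113.20/32", "Internet Gateway"),
    ("203.0.113.30/32", "Internet Gateway"),
]


def lookup(route_table, destination):
    """Return the next hop of the most specific route matching destination."""
    dest = ipaddress.ip_address(destination)
    matches = []
    for prefix, next_hop in route_table:
        network = ipaddress.ip_network(prefix)
        if dest in network:
            matches.append((network.prefixlen, next_hop))
    if not matches:
        return None
    # The longest prefix (largest prefix length) wins.
    return max(matches, key=lambda match: match[0])[1]


# A VPN peer matches both 0.0.0.0/0 and its own /32; the /32 is chosen.
vpn_next_hop = lookup(T0_ROUTES, "203.0.113.10")
# Any other destination only matches the default route.
other_next_hop = lookup(T0_ROUTES, "198.51.100.7")
```

With this table, `vpn_next_hop` is `"Internet Gateway"` and `other_next_hop` is `"vTGW"`, which mirrors why the VPN tunnels stay up after the default route is added.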

HCX works differently in that the public IP addresses actually reside on the virtual appliances, so we use a policy route to send traffic from the internet interface (if the external network profile was selected during the deployment of HCX) to the correct appliances, and also for the return traffic.

The good news with all of this is that a vTGW can be safely integrated into your existing SDDC and can also potentially save on costs and latency since traffic does not have to traverse two Transit Gateways.
